A wearable computer is a computing device small and light enough

to be worn on one's body without causing discomfort. Unlike a laptop

or a palmtop, a wearable computer is constantly turned on and interacts

with the

a real-world task.

The information it presents can even be highly context-sensitive.

The system gathers real-world information through a well-defined API. The current implementation includes keyboard input, network input, speech recognition input, video camera input, GPS input and infrared input. This stage allows devices to be connected on the fly and provides a device-independent abstraction layer. Any necessary pre-processing of the data is done in the next stage.
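
A device-independent input layer of this kind could be sketched roughly as follows. This is only an illustration in Python, not the framework's actual API: the names InputEvent, InputDevice, QueuedDevice and InputStage are hypothetical, and the stand-in device simply replays readings supplied in advance instead of talking to real hardware.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
from typing import Any, Dict, Iterable, List


@dataclass
class InputEvent:
    """A device-independent reading handed on to the next stage."""
    source: str   # name of the device that produced it, e.g. "gps"
    payload: Any  # raw data; pre-processing is left to the next stage


class InputDevice(ABC):
    """Hypothetical common interface that every input device implements."""

    def __init__(self, name: str) -> None:
        self.name = name

    @abstractmethod
    def read(self) -> List[InputEvent]:
        """Return the events that arrived since the last call."""


class QueuedDevice(InputDevice):
    """Stand-in for a real driver: replays readings supplied up front."""

    def __init__(self, name: str, readings: Iterable[Any]) -> None:
        super().__init__(name)
        self._pending = list(readings)

    def read(self) -> List[InputEvent]:
        events = [InputEvent(self.name, r) for r in self._pending]
        self._pending.clear()
        return events


class InputStage:
    """Registry that lets devices be attached and detached at run time."""

    def __init__(self) -> None:
        self._devices: Dict[str, InputDevice] = {}

    def attach(self, device: InputDevice) -> None:
        self._devices[device.name] = device

    def detach(self, name: str) -> None:
        self._devices.pop(name, None)

    def poll(self) -> List[InputEvent]:
        """Collect pending events from every attached device."""
        events: List[InputEvent] = []
        for device in self._devices.values():
            events.extend(device.read())
        return events
```

Because the rest of the system only ever sees InputEvent objects, a new kind of device can be attached without changing the later stages.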

The core of the system contains a basic natural language processor, which performs sentence translation. It converts a sentence into a command stream from which two pieces of information are extracted: which service to invoke and how the output should be rendered. A service manager is responsible for the instantiation and monitoring of the services. The service manager also checks and queues commands to provide resilience against system failures.
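
To make the two extracted pieces of information concrete, here is a minimal Python sketch of such a core. It is an assumption-laden toy, not the processor described above: the verb table RENDERING_VERBS, the keyword matching in parse_sentence and the retry policy of ServiceManager are all invented for illustration.

```python
from collections import deque
from dataclasses import dataclass
from typing import Callable, Deque, Dict, Iterable, List, Optional, Tuple


@dataclass
class Command:
    service: str    # which service to invoke
    rendering: str  # how the output should be rendered
    argument: str   # remainder of the sentence, passed to the service


# Hypothetical vocabulary: the leading verb selects the requested rendering.
RENDERING_VERBS = {"show": "visual", "tell": "speech", "read": "speech"}


def parse_sentence(sentence: str, known_services: Iterable[str]) -> Optional[Command]:
    """Translate a sentence into a Command, or None if it is not understood."""
    words = sentence.lower().split()
    if len(words) < 2 or words[0] not in RENDERING_VERBS:
        return None
    services = set(known_services)
    for i, word in enumerate(words[1:], start=1):
        if word in services:
            return Command(word, RENDERING_VERBS[words[0]], " ".join(words[i + 1:]))
    return None


class ServiceManager:
    """Checks and queues commands, then runs them against registered services."""

    def __init__(self) -> None:
        self._services: Dict[str, Callable[[str], str]] = {}
        self._queue: Deque[Command] = deque()

    def register(self, name: str, handler: Callable[[str], str]) -> None:
        self._services[name] = handler

    def submit(self, command: Command) -> bool:
        """Queue a command; commands for unknown services are rejected."""
        if command.service not in self._services:
            return False
        self._queue.append(command)
        return True

    def run_pending(self) -> List[Tuple[str, str]]:
        """Run queued commands; a command whose service fails stays queued."""
        results: List[Tuple[str, str]] = []
        retry: Deque[Command] = deque()
        while self._queue:
            command = self._queue.popleft()
            try:
                output = self._services[command.service](command.argument)
            except Exception:
                retry.append(command)  # keep it for a later attempt
                continue
            results.append((command.rendering, output))
        self._queue = retry
        return results
```

With a "weather" service registered, the sentence "Show me the weather in London" would become a command for the weather service with a visual rendering request.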

The output stage takes a modality-neutral result from a service and decides how to render the information. The decision is based on two criteria: what the user has asked for, and how the system perceives the user's current context or environment.

If the user has asked to be shown a piece of information, this implies a visual rendition. If the system detects (through the input sensors) that the user is moving or busy with an activity, it can assume that the user's attention might be distracted if results are displayed in front of him (imagine what would happen if the user were driving). In this case the system overrides the user's request and redirects the results to a more suitable renderer, such as speech.
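
The override rule can be expressed in a few lines. The sketch below is in Python and uses hypothetical names (UserContext, choose_renderer); how the system actually derives "moving" or "busy" from its sensors is not covered here.

```python
from dataclasses import dataclass


@dataclass
class UserContext:
    """What the input sensors suggest about the user's current situation."""
    moving: bool = False
    busy: bool = False


def choose_renderer(requested: str, context: UserContext) -> str:
    """Pick a renderer from the user's request and the perceived context."""
    # A visual rendition could distract a user who is moving or busy,
    # so the request is overridden and the result is spoken instead.
    if requested == "visual" and (context.moving or context.busy):
        return "speech"
    return requested
```

For example, choose_renderer("visual", UserContext(moving=True)) returns "speech", while the same request from a stationary, idle user is honoured as "visual".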

Augmented Reality

Wearable computing introduces two new concepts, ‘mediated reality’ and ‘augmented reality’.

Mediated reality refers to the encapsulation of the user's senses: the computer is incorporated into the user's perceptive mechanisms and is used to process outside stimuli. For example, one can mediate one's vision by using a computer-controlled camera to enhance it. The primary activity in mediated reality is direct interaction with the computer, which means that the computer is "in charge" of processing and presenting reality to the user.

Augmented Reality combines real-world scenes and virtual scenes, augmenting the real world with additional information. The computer must be able to operate in the background, providing enough resources to enhance but not replace the user's primary experience of reality. This can be achieved by using tracked see-through display units and earphones to overlay visual and audio material on real objects. This technology adds value to human knowledge, memory and intelligence.

An example of an AR application is a guidebook as above. As the tourist walks

around the library, his wearable computer uses sensors, for example a combination

of GPS and head tracking equipment, to detect his physical position and orientation.

Some text describing the library is shown on the display unit over the actual

building. The wearable computer assists further in enhancing the value of the

real world experience, using augmented reality.
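
As a rough illustration of how position and orientation can be combined, the Python sketch below decides whether a landmark lies within the wearer's field of view. The function names, the 40-degree field of view and the use of a compass-style heading are assumptions made for this example; a real system would also need distance and occlusion checks.

```python
import math


def bearing_deg(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Initial great-circle bearing from point 1 to point 2, in degrees from north."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dlon = math.radians(lon2 - lon1)
    y = math.sin(dlon) * math.cos(phi2)
    x = math.cos(phi1) * math.sin(phi2) - math.sin(phi1) * math.cos(phi2) * math.cos(dlon)
    return math.degrees(math.atan2(y, x)) % 360.0


def in_view(user_lat: float, user_lon: float, heading_deg: float,
            landmark_lat: float, landmark_lon: float,
            fov_deg: float = 40.0) -> bool:
    """True if the landmark's bearing falls inside the wearer's field of view.

    heading_deg is the direction the head is facing (from the head tracker),
    measured clockwise from north; fov_deg is an assumed display field of view.
    """
    bearing = bearing_deg(user_lat, user_lon, landmark_lat, landmark_lon)
    # Signed angular difference in the range [-180, 180).
    diff = (bearing - heading_deg + 180.0) % 360.0 - 180.0
    return abs(diff) <= fov_deg / 2.0
```

When in_view returns True for the library's coordinates, the guidebook application would know it is appropriate to overlay the descriptive text on the display.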

Display Systems

The output device of a wearable computer could be either a head-mounted display (HMD) unit with an earpiece or, for some applications, only the earpiece. Though there could be several other display devices intended for specific applications, HMD systems are of interest in the context of wearable computers.

There are two different types of HMD systems. The first, intended for industrial or regular use, has a see-through lens and a small projection system. The processing system projects output data onto the lens only when needed. The projection usually happens on only one of the lenses, and the other lens remains free for clear vision.

The second type of head-mounted display is the blocking type, which requires the full attention of the user. It is mostly used for 3D modeling, for understanding complex mechanical designs, or for personal entertainment. The HMD systems shown in these pictures have both the earpiece and the mouthpiece built in. HMD systems are already well into the development cycle, and support several attractive features such as wireless connectivity, external connectors for audio and video, and control settings.

Input Devices

By now, you probably have a fair understanding of the criteria for selecting input devices for wearable computers (WCs). However, there is no holy grail of input devices for a wearable computer.

Speech recognition may appear to be the best-suited input method, but it may not be preferred in all applications and environments, due to privacy and performance issues.

Handwriting and keyboard input can be among the most efficient input methods, provided the input device is not too small or awkward. Research in the wearable domain is producing combination products like the SenseBoard shown in the picture. This device is simply worn on the hands or wrists and senses typing or handwriting input. It has no cables and communicates over infrared.

Gesture input devices are simple, compact, and optimized for wearable use. These devices take their input from natural gestures. Ubi-Finger is one such device, but it has not been tested for all types of applications.

The point to take away is that the user needs to be open-minded and adaptable to emerging input devices in order to find the best combination.

Thumb Typing - Carsten Mehring, a mechanical engineer at the University of California, Irvine, has come up with a device that turns your hands into a QWERTY-style keyboard. Mehring's device uses six conductive contacts on each thumb (three on the front and three on the back) to represent a keyboard's three lettered rows. Contacts on the tips of the remaining eight fingers represent its columns. Touching the right index finger to the middle contact on the front of the right thumb, for instance, generates a j. The top contact on the thumb yields a u, while the middle contact on the back of the thumb would produce an h. Mehring says the similarity to typing makes his input device easier to master than others that require an entirely different set of motions. He has applied for a patent and hopes to market a product by the end of 2002.
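
The row-and-column idea can be illustrated for the right index finger, the only finger for which examples are given above. The Python sketch below is a guess at the scheme rather than Mehring's actual mapping: it assumes that the front contacts select the J column and the back contacts the H column of a standard QWERTY layout, and that the "top contact" yielding u is on the front of the thumb. Only j, u and h are confirmed by the description; the other keys follow from the layout.

```python
# Standard QWERTY letter rows, keyed by the thumb contact that selects them.
QWERTY_ROWS = {
    "top": "qwertyuiop",
    "middle": "asdfghjkl;",
    "bottom": "zxcvbnm,./",
}

# Assumption: for the right index finger, the front of the thumb selects the
# J column (index 6) and the back of the thumb selects the H column (index 5).
RIGHT_INDEX_COLUMNS = {"front": 6, "back": 5}


def right_index_key(side: str, row: str) -> str:
    """Key produced when the right index fingertip touches a thumb contact."""
    return QWERTY_ROWS[row][RIGHT_INDEX_COLUMNS[side]]
```

Under these assumptions, right_index_key("front", "middle") gives "j", right_index_key("front", "top") gives "u", and right_index_key("back", "middle") gives "h", matching the three examples in the description.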

Networks

We need to discuss two different kinds of networks in reference to a wearable computer. One connects the device to the external world; the other interconnects its various components, the latter being new to wearable computers.

The first issue, connecting the WC to the external world, offers several choices, such as WAP or Cellular Digital Packet Data (CDPD). This aspect of networking is not specific to wearable computers, and can evolve over time alongside other electronic gadgets.

The second issue, interconnecting the various parts of the WC, may involve both wired and wireless connections. The CPU, storage unit and similar peripherals will be connected, with or without cables, to the wearable motherboard, which is a garment with a (physically) flexible bus and standard expansion slots. Peripherals like the HMD and wrist- or finger-worn devices may use standard wireless connections like Bluetooth.

There could also be a third type of communication: two wearable computers talking to each other. This near-field networking could use infrared (IrDA or IRX) or radio-based systems, to meet the need, which will invariably arise, to exchange information between two users.

Power sources

Batteries add size, weight, and inconvenience to wearable computers. There are several ways of harnessing the energy expended during the user's everyday actions to generate power for one’s computer, thus eliminating the impediment of batteries. However, nothing prevents the use of the miniature batteries (for example Lithium, Li-MnO2 or Li-C) that are currently used in electronic gadgets.

Body Bus

Tom Zimmerman of MIT has shown that the non-contact coupling between the user’s

body and weak electric fields can be used to create and sense tiny nano-amp

currents in the user’s body. Modulating these signals creates Body Net,

a personal-area network that communicates through the skin. Using roughly the

same voltage and frequencies as audio transmissions, this will be as safe as

wearing a pair of headphones.

Keeping data in the human body avoids the intrusion of wires, the need for an

optical path for infrared, and conventional problems such as regulation and

eavesdropping.

Your shoe computer can talk to a wrist display, a keyboard and heads-up glasses. Activating your body means that everything you touch is potentially digital. A handshake becomes an exchange of digital business cards, a friendly arm on the shoulder provides helpful data, touching a doorknob verifies your identity, and picking up a phone downloads your numbers and voice signature for faithful speech recognition.

Every emerging discipline in computing is born first as a theoretical approach, almost a dream in the mind of the inventor. Some such theories, such as encryption algorithms, have clear practical ramifications. Others take some time to evolve from concepts on paper into something that can be applied in the real world. This could be one such example.

Challenges & Limitations in Wearable Technologies

The biggest challenge in wearable systems is to identify effective interaction modalities for wearable computers. Developing software that accurately models common user tasks is probably one of the most significant challenges faced by wearable system designers.

The other software challenges include the integration of information repositories that augment limited device capabilities. Consider a scenario in which streaming video augments the user's visual data, coupled with cross-domain indexing and data correlation.

Turning to the current limitations of hardware technologies for wearable computing, there are four major problems: power, networking, privacy and interface. Adding more features to the wearable device requires more power and generates more heat. This forces designers to build systems that take little power and little space and last a long time.

To explain the second limitation, networking, we first need to understand that networking may never be truly ubiquitous; there will always be places where access to the Internet is simply not available. Inter-component communication using the on-body wireless bus (an internal electrical pathway along which signals are sent from one part of the computer to another) is still an area of research.

Privacy is not yet a limitation, but may become one in the future. Wearable computers give you access to information that you normally wouldn't have. According to Dr. Starner, one can record conversations, keep personal notes and schedules, and keep a diary on the wearable. The first part of privacy is protecting one's own personal information; the second is preventing the wearable user from stealing others' information. With massive amounts of information available right before your eyes, the user is expected to use the device judiciously.

The last, most interesting and most haunting problem is "How do we communicate with the computer and how does it communicate with us?" Which is the most effective way of communicating with the device and back to the human? What are the prime ergonomic issues, and what makes the device more convenient? The answer will only evolve over time, and there may never be a perfect one.

Applications of Wearable Computing

something useful with this thing?" Perhaps the strongest reason one should respond with "Many things" and "Yes, you can!" lies in the origin of wearable computing.

The adjacent photograph shows a real-life example of a mining engineer performing a chemical analysis of a sample live in the field. It will not be long before such devices arrive as consumer appliances as well.

Privacy & Health Issues

Though wearable computing does not raise any new privacy issues, it is true that the most useful information is also the most personal. Just because wearable computers can be used for surveillance purposes does not mean that they are being used that way.

Wearable computing does not enable any privacy intrusion that could not otherwise be carried out. It simply helps you get on with what you mostly want, what you mostly do, and what you mostly like to do, anywhere and everywhere.

Health and safety considerations will be important when one is wearing these things all of one's waking hours (and arguably sleeping hours too!). Remember Carpal Tunnel Syndrome? Last but not least, the resulting outfits should be fashionable and provide the buyer with a choice of fashions. After all, one doesn’t want to go to a formal dinner looking like C3PO.

Conclusion

We have all the technologies needed to make a viable wearable computer today. A lot of research and experimentation toward practical and commercial use of WCs is going on around the world. Several varieties of WCs are indeed commercially available, but as of now most of them are tailor-made for specific applications. It is only a matter of time before the consumer community accepts the idea, manufacturers take up patronage, and the Catch-22 cycle of mass production is broken.

The paradigm shift that the WC will bring, the computer working along with you instead of you working at the computer, will have an impact similar to the paradigm shift brought by the earlier PCs. It will augment the user’s senses, intellect and memory, and provide him with a huge amount of computational power and information (both local and networked) without interfering with what he is doing. Unlike Artificial Intelligence (which attempts to emulate human intelligence in the computer), the WC works alongside the human, each doing what it is better at.

After a few cycles of evolution, the wearable computer will become highly ergonomic, and a user, over an extended period of usage, will come to feel it as a true extension of mind and body. The combined capability of the resulting synergistic whole will far exceed that of the “parts”. This will undoubtedly enhance the quality of life of the user, in the workplace and in all facets of daily life.

References & Acknowledgements

All images in this article

have been reproduced with consent from original authors, with due acknowledgements

here in the references section.

The Humane Interface by Raskin, Jef. This recently published book goes into greater depth about the advantages of quasimodes and the primacy of text over icons. It also discusses past implementations of the LEAP text navigation method and is a good summary of evaluation techniques for determining quantitative user interface efficiency.

Augmented Reality, Research Projects on Computer Augmented Environment projects at Sony Computer Science Laboratories, Japan

http://www.csl.sony.co.jp/project/ar/ref.html

A Wearable Application Integration Framework, Neill J Newman, University of Essex

http://www.cs.washington.edu/sewpc/papers/newman.pdf

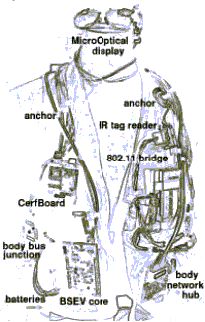

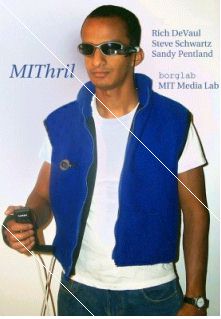

MIThril, research project at MIT. The hardware platform combines body-worn computation, sensing, and networking in a clothing-integrated design. The MIThril software platform is a combination of user interface elements and machine learning tools built on the Linux operating system

http://www.media.mit.edu/wearables/mithril

Linux Wearable, work being done in the world of Linux for enabling wearable technologies

http://www.linuxdoc.org/HOWTO/Wearable-HOWTO.html

Steve Mann, Univ of Toronto, Keynote address on “Wearable Computing as means for personal empowerment”

http://wearcam.org/wearcompdef.html

Ripley Wearable Computer, Commercial wearable products currently available on Linux

http://www.zerospin.com/ripley/index.html

Proceedings of The Second International Symposium on Wearable Computers (ISWC '98), 1998: Thad Starner, Brent Schiele, and Alex Pentland, Visual contextual awareness in wearable computing

Wearable Computing, Patrick Sinclair, Intelligence, Agents and Multimedia Group, Department of Electronics and Computer Science, University of Southampton

5th International Symposium of Wearable Computers, 2001, Zurich, http://www.iswc.ethz.ch

Wearable Computer Systems at Carnegie Mellon University

http://www.cs.cmu.edu/afs/cs.cmu.edu/project/vuman/www/home.html

Computer-Augmented Vision Technology, http://www.cs.unc.edu/~us

Kitty Tech, Keyboard Independent Touch Typing Method by Dr Carsten Mehring, http://www.kittytech.com/

Xybernaut, Manufacturers of wearable computers, HMD.

http://www.xybernaut.com/newxybernaut/home.htm

Origin Instruments Corpn. - Input devices, http://orin.com/index.htm

Virtual Vision: Head Mounted Displays, http://www.virtualvision.com

Kopin Corpn: Display devices, http://www.kopin.com

i-Glasses: Head Mounted Display Units, http://www.i-glasses.com/Store/SVGA.php3

Mobile Augmented Reality Systems (MARS), (c) Columbia University Computer Graphics and User Interfaces Lab.

MIT Media Lab: Rich DeVaul, Alex "Sandy" Pentland, Steve Schwartz

(Article dated: Oct' 2002)